AI Is Getting Smarter. Our Systems for Controlling It Are Not.

I think most of the conversation about AI is missing what is actually happening. The real gap in AI is not intelligence. It is control at the moment of execution.

The Gap Between Intelligence and Execution

AI capabilities are compounding. Control primitives are not.

That gap is becoming expensive. As AI moves off screens and into robots, machinery, and industrial systems, the stakes change fast. The real problem is not bad output. It is bad action. Wrong text is annoying. Wrong action is expensive. Once a bad output becomes an action, the cost stops being theoretical.

The Hidden Flaw in Current Architecture

Most systems still rely on a bad assumption: software acts first, and the logs explain it afterwards. That has been survivable in low-stakes software. It is the wrong architecture for systems that can trigger real-world physical or financial consequences.

When software acts and then reports, the evidence comes after the consequence.

Post-hoc telemetry is not authority. It is explanation after action.

The Real-World Cost of Assumed Trust

When software is left to police itself, the cost of error scales violently. If the host is compromised, wrong, drifted, or manipulated, it can still trigger machinery before anyone has proven that the action was actually authorised.

In the real world, one bad event can wipe out far more value than the cost of putting proper control in place. In manufacturing, every hour of downtime adds to the bill, and in large operations the exposure escalates very quickly. That is before you get to damaged equipment, safety exposure, liability, and loss of trust in deployment.

One bad event can cost more than building proper control into the execution path.

The Structural Correction

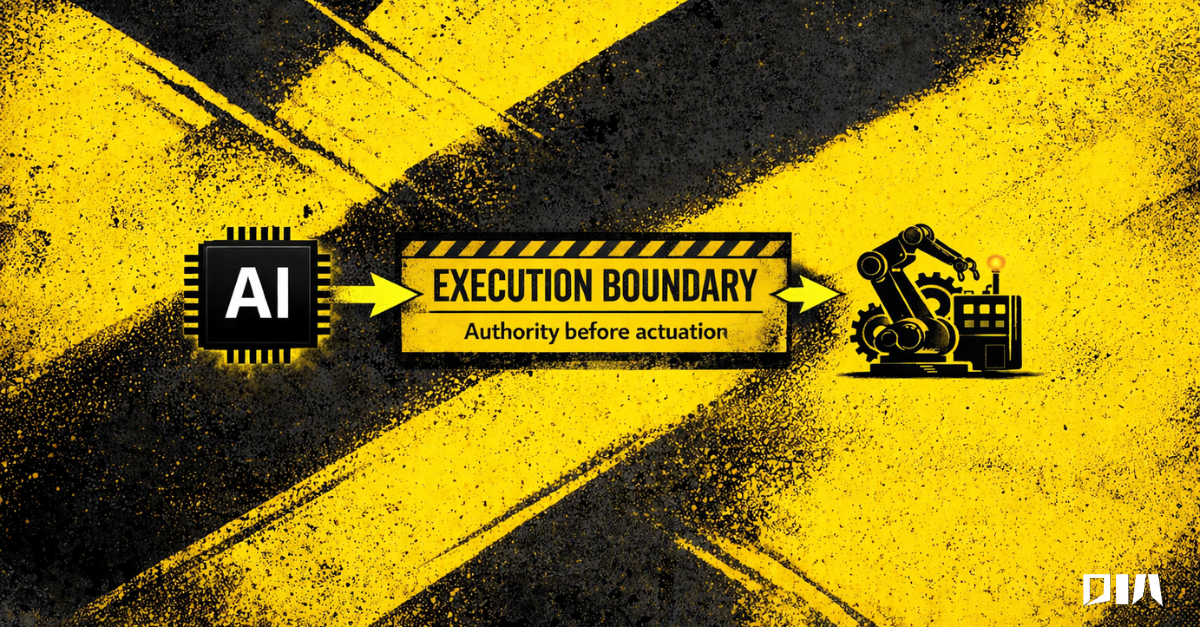

Any system that can cause real-world consequences needs an external authority boundary between intent and execution. Not another policy layer. Not another dashboard. A control point that can enforce constraints, not just describe them.

Software can advise, infer, predict, or request. But the final step to execution must be governed by something that the host system cannot bypass.
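
To make the shape of that boundary concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the names `ActionRequest` and `AuthorityGate`, the `conveyor_speed` actuator, and the limits are illustrative assumptions, not a reference design. It shows only the ordering that matters: the host submits a request, the gate checks it against constraints, the decision is recorded before anything moves, and unknown actions are denied by default. An in-process class cannot make itself unbypassable, so in a real deployment this logic would have to live outside the host, for example in separate hardware or an independent controller.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class ActionRequest:
    """What the host software asks for. A request, not a command."""
    actuator: str
    value: float


@dataclass
class AuthorityGate:
    """Hypothetical control point between intent and execution."""
    # Assumed constraint model: actuator name -> (min, max) allowed value.
    limits: Dict[str, Tuple[float, float]]
    # The only path to the machinery. The host never holds this directly.
    execute: Callable[[ActionRequest], None]
    decisions: List[Tuple[ActionRequest, bool, str]] = field(default_factory=list)

    def submit(self, request: ActionRequest) -> bool:
        allowed, reason = self._check(request)
        # Record the decision first, so the evidence precedes the consequence.
        self.decisions.append((request, allowed, reason))
        if allowed:
            self.execute(request)
        return allowed

    def _check(self, request: ActionRequest) -> Tuple[bool, str]:
        bounds = self.limits.get(request.actuator)
        if bounds is None:
            return False, "unknown actuator: denied by default"
        low, high = bounds
        if not (low <= request.value <= high):
            return False, f"value {request.value} outside [{low}, {high}]"
        return True, "within limits"


# Usage: a list stands in for the real machinery in this sketch.
executed: List[ActionRequest] = []
gate = AuthorityGate(limits={"conveyor_speed": (0.0, 2.0)}, execute=executed.append)

gate.submit(ActionRequest("conveyor_speed", 1.5))  # within limits, so it runs
gate.submit(ActionRequest("conveyor_speed", 9.0))  # out of range, so it is refused
gate.submit(ActionRequest("press_force", 1.0))     # no limit defined, so it is refused
```

The design choice worth noticing is that the host cannot reach `execute` except through `submit`, and a refusal leaves the same record as an approval. The software can still advise, infer, predict, or request. It just cannot act on its own say-so.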

The Future of AI Governance

Smarter software is useful. It is not the same thing as proven authority. A system can look impressive, test well, and still be wrong at the exact moment that matters.

The next phase of AI won’t be won by intelligence alone.

It’ll be won by enforceable authority at the edge of reality.